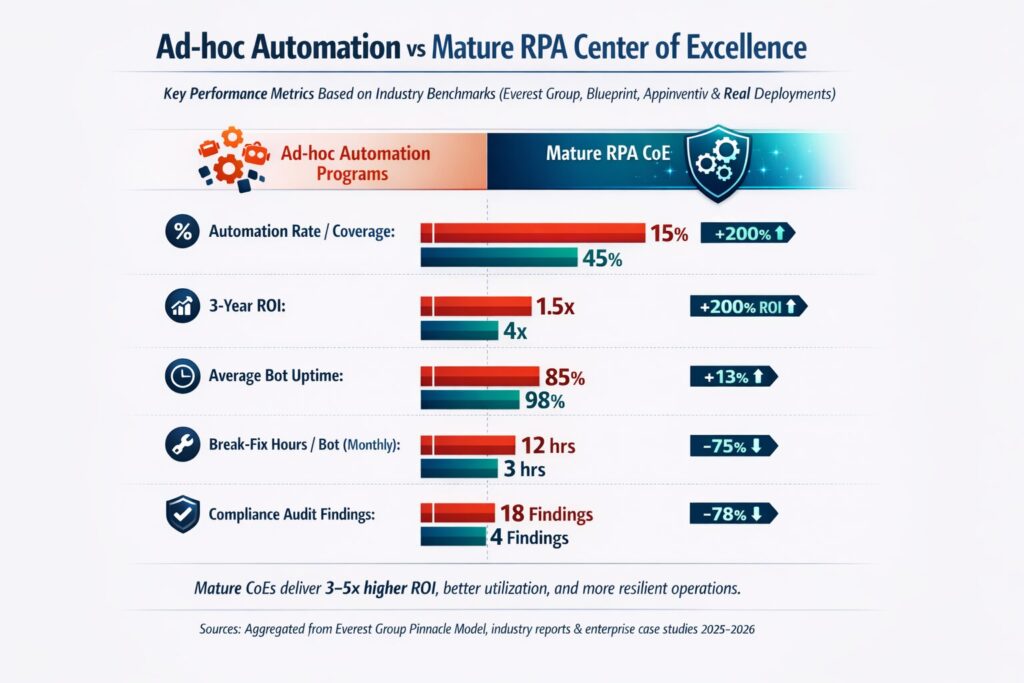

Analyst studies show that organizations with highly mature RPA Centers of Excellence achieve significantly higher automation rates and materially better returns than those running disconnected, ad‑hoc bots. One Everest Group benchmark cited by industry practitioners found that companies with advanced RPA CoEs see automation rates about 72 percent higher and three‑year ROI over 50 percent better than peers without a strong CoE. Broader RPA research also shows that typical first‑year ROI from automation ranges from 30 to 200 percent, with long‑term returns reaching 300 percent when programs scale effectively. In practice, the difference between middling results and compounding value usually comes down to whether automation is shaped by a deliberate CoE or scattered across teams.

In 2026, that gap is widening because automation is no longer just a few task bots in finance or HR. Hyperautomation strategies now combine RPA with process mining, machine learning, generative AI, and orchestration platforms to automate entire end‑to‑end processes. At the same time, agentic AI and specialized AI agents are beginning to diagnose, optimize, and orchestrate workflows autonomously rather than simply following fixed scripts. This shift makes governance, security, and architectural discipline non‑negotiable for any organization that wants to grow from a handful of bots to hundreds of digital workers embedded deep in its core systems.

This guide gives a complete, battle‑tested framework for building a modern RPA CoE designed for 2026 realities rather than 2018 pilot programs. It combines best practices from vendor playbooks with hard lessons from real implementations in banking, healthcare, manufacturing, insurance, and logistics where automation has already moved into enterprise scale. Readers will find a seven‑step framework, example team structures, checklists, template ideas, and case insights, most of them anonymized, showing what worked, what failed, and how to avoid common traps.

An RPA Center of Excellence is a dedicated, centralized function that sets strategy, standards, and governance for automation and then supports or delivers solutions across the organization. Leading definitions emphasize that the CoE is accountable for managing, governing, and expanding automation, not just building scripts. Typical responsibilities include defining an automation roadmap, prioritizing processes, enforcing development standards, overseeing security and compliance, running enablement programs, and tracking value realization.

This article focuses on CoEs for enterprise‑scale RPA and hyperautomation: environments where you either already run or expect to run dozens to hundreds of bots across multiple business units and systems. It assumes cross‑functional sponsorship, enterprise risk and compliance obligations, and a roadmap that extends toward AI‑driven orchestration over the next few years.

What Is an RPA CoE and Why You Need One in 2026

Centralized CoE vs. Decentralized Automation

In decentralized automation, individual teams buy tools, build bots, and run them on their own infrastructure with limited coordination. This can deliver quick wins but often creates fragmented standards, fragile scripts, audit gaps, and duplicated effort as different groups solve the same problems in different ways. By contrast, an RPA CoE is a centralized or federated group that oversees automation strategy, standards, and lifecycle management across the enterprise, often bringing together IT, process owners, and citizen developers under a shared operating model.

Consulting and vendor guidance is clear that CoEs are now a core ingredient for scaling RPA beyond pilots. Blue Prism, Automation Anywhere, and other platform providers position the CoE as the unit that selects processes, enforces a consistent operating model for robotic automation, and ensures bots remain reliable assets rather than fragile scripts scattered across the landscape. Practitioners also describe the CoE as a lightweight but authoritative automation services team that provides standards, design review, development support, and monitoring to business units.

Evolution to Hyperautomation CoE in 2026

Since Gartner popularized the term hyperautomation, many enterprises have evolved their RPA CoEs into broader automation or intelligent automation CoEs that also cover AI, process mining, intelligent document processing, and orchestration. Hyperautomation involves orchestrating a stack of technologies to automate full processes end‑to‑end, not just isolated tasks, and relies heavily on shared governance and architectural patterns. Recent process‑excellence research shows that agentic AI and AI agents are becoming part of this stack, with around 40 percent of surveyed organizations already using agentic AI for transformation and a majority planning investments in the next year.

In this context, the CoE becomes the logical home for coordinating AI‑enhanced automations, setting guardrails for autonomous agents, and integrating process mining insights into the automation pipeline. Manufacturing and logistics CoE case studies now describe the CoE as an operating model that governs autonomous digital labor rather than a team that simply builds bots. This broader scope is why many organizations now refer to Automation CoEs or Hyperautomation CoEs, while still retaining RPA as a core capability.

Key Benefits of an RPA CoE

Research and case studies highlight several recurring benefits of a well‑run automation CoE.

- Higher ROI and faster payback: Companies with mature RPA CoEs report substantially higher automation rates and three‑year ROI, with Everest data indicating around 51 percent higher ROI and more than 70 percent higher automation penetration compared with less mature peers. One healthcare Automation CoE case study reports a 6.7 times return and roughly 3 million dollars in savings when governance and prioritization are centralized. Broader RPA benchmarks put typical first‑year ROI between 30 and 200 percent, with long‑term returns approaching 300 percent where programs scale under structured oversight.
- Standardized governance and compliance: Banks, insurers, and healthcare providers use CoEs to align automation with IT controls, audit requirements, and regulatory obligations from the start, avoiding the retrofit work that comes with separate departmental bots.
- Reusability of components: CoEs curate reusable assets such as common integrations, templates, and libraries so new automations build on proven components instead of reinventing patterns, which shortens delivery time and improves reliability.
- Better change management and upskilling: Central teams typically run training, develop internal playbooks, and build a community of practice, which research shows is important for sustaining performance and expanding RPA use safely.
- Reduced shadow IT and failed bots: Studies of RPA scaling failures often trace problems back to siloed ownership and the absence of shared governance, whereas CoEs provide a joint IT–business forum for design review, dependency management, and production monitoring.

The 7‑Step Framework to Build a Successful RPA CoE

Step 1: Secure Executive Sponsorship and Define Strategic Objectives

Vendor and consulting sources repeatedly emphasize that committed executive sponsorship is the first prerequisite for a sustainable CoE. Sponsors, often at the CFO, COO, or CIO level, provide the budget, air cover, and decision weight needed to prioritize automation across units and enforce common standards. They also help avoid the pattern where RPA begins as a small IT or operations experiment and then stalls because no one owns cross‑functional value realization.

Best practice is to define a clear automation vision and 2–3 strategic objectives before formalizing the CoE. Common objectives include cost savings, capacity release for growth, improved compliance quality, and faster customer response times, and they should be translated into quantifiable targets such as hours saved, error reductions, and payback windows. Some organizations also define non‑financial goals such as employee experience and data quality improvements, which are especially relevant when pairing RPA with AI and process mining.

Tools and techniques:

- Strategy workshops with key stakeholders from IT, risk, and business functions to align on automation themes and constraints.
- A lightweight business case model that estimates potential hours saved and cost impact per domain, using industry ranges for RPA ROI and cost reductions.
- A CoE charter outline that captures vision, scope, decision rights, and funding model, often borrowing from templates shared in platform or consulting guidance.
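A lightweight business case can start as a few formulas. The sketch below assumes annual hours saved equal volume × automation rate × handling time, and computes first‑year ROI and payback months from that estimate. The `Candidate` fields, the function names, and every figure are hypothetical placeholders, not a standard model; real inputs would come from discovery workshops and finance.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """One automation candidate; every field name and figure here is hypothetical."""
    name: str
    annual_volume: int         # transactions per year
    minutes_per_item: float    # manual handling time per transaction
    automation_rate: float     # share of volume the bot completes without help (0..1)
    loaded_hourly_cost: float  # fully loaded labor cost per hour
    build_cost: float          # one-off design, build, and test cost
    annual_run_cost: float     # licences, infrastructure, and support per year


def hours_saved(c: Candidate) -> float:
    """Annual manual hours the bot is expected to absorb."""
    return c.annual_volume * c.automation_rate * c.minutes_per_item / 60


def first_year_roi(c: Candidate) -> float:
    """(gross benefit - total cost) / total cost over the first year."""
    gross = hours_saved(c) * c.loaded_hourly_cost
    total_cost = c.build_cost + c.annual_run_cost
    return (gross - total_cost) / total_cost


def payback_months(c: Candidate) -> float:
    """Months of net monthly benefit needed to recover the build cost."""
    net_per_month = (hours_saved(c) * c.loaded_hourly_cost - c.annual_run_cost) / 12
    return c.build_cost / net_per_month if net_per_month > 0 else float("inf")


if __name__ == "__main__":
    invoice = Candidate("invoice matching", 40_000, 6, 0.7, 40.0, 60_000, 25_000)
    print(f"{hours_saved(invoice):.0f} h/yr, ROI {first_year_roi(invoice):.0%}, "
          f"payback {payback_months(invoice):.1f} months")
```

With these placeholder inputs the estimate comes to about 2,800 hours a year, a first‑year ROI of roughly 32 percent, and payback in a little over eight months. Keeping the model this small makes it easy to compare domains on the same basis before investing in a detailed business case.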

Lesson from Deployment 1 (Global Bank):

In a European banking group described in a case study on RPA governance, automation started in isolated units and quickly ran into conflicts with risk, audit, and IT operations. The bank later created a central governance structure with explicit mandates for process selection, risk assessments, and technology standards, which allowed it to scale automation while maintaining regulatory compliance. The main lesson was that executive‑level alignment and clear governance constructs are needed early; otherwise, each new bot triggers separate debates about risk and ownership.

Action checklist:

- Identify one senior executive as the named sponsor with visible accountability for automation outcomes.
- Agree on 2–3 strategic objectives and translate them into measurable KPIs and time frames.
- Draft a one‑page CoE vision and mandate to socialize with leadership.
- Decide upfront whether the CoE will be funded centrally, through chargeback, or through a hybrid model.

Common pitfalls to avoid:

- Treating RPA as a side project without C‑level oversight, which makes it easy to cut when budgets tighten.
- Framing goals purely as cost cutting, which can undermine support from business leaders and employees.
- Leaving risk and compliance leaders out of early discussions, which leads to rework when controls are enforced later.

Step 2: Design the CoE Operating Model and Governance Structure

After sponsorship and objectives are clear, the CoE needs an operating model that defines decision‑making, engagement with business units, and lifecycle oversight. Common patterns include centralized CoEs that own end‑to‑end delivery, federated models where business unit teams build automations under CoE standards, and hybrids that mix central platform governance with local delivery pods.

Governance frameworks normally cover process intake, assessment, design review, deployment approvals, change management, and incident handling. Research on RPA governance stresses the importance of shared ownership between IT and business to avoid silos and to ensure both technical robustness and business relevance. For hyperautomation CoEs, governance also spans AI model usage, data access policies, ethics considerations, and boundaries around autonomous decision‑making.
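One way to make lifecycle gates enforceable rather than advisory is to model them as allowed state transitions, each requiring a named sign‑off. The sketch below is a minimal illustration under assumed stage and gate names (all hypothetical); it also applies a simple segregation‑of‑duties rule so that a developer cannot approve their own production release, and routes production changes back through design review.

```python
from enum import Enum


class Stage(Enum):
    INTAKE = "intake"
    ASSESSMENT = "assessment"
    DESIGN_REVIEW = "design_review"
    BUILD = "build"
    TEST = "test"
    DEPLOYMENT_APPROVAL = "deployment_approval"
    PRODUCTION = "production"
    RETIRED = "retired"


# Allowed transitions and the sign-off each one needs (gate names are hypothetical).
GATES = {
    (Stage.INTAKE, Stage.ASSESSMENT): "intake_form_complete",
    (Stage.ASSESSMENT, Stage.DESIGN_REVIEW): "prioritized_by_steering_committee",
    (Stage.DESIGN_REVIEW, Stage.BUILD): "design_approved",
    (Stage.BUILD, Stage.TEST): "code_review_passed",
    (Stage.TEST, Stage.DEPLOYMENT_APPROVAL): "uat_signed_off",
    (Stage.DEPLOYMENT_APPROVAL, Stage.PRODUCTION): "change_approved",
    (Stage.PRODUCTION, Stage.DESIGN_REVIEW): "change_request_accepted",  # changes re-enter review
    (Stage.PRODUCTION, Stage.RETIRED): "retirement_approved",
}


class GateError(Exception):
    """Raised when a lifecycle move skips a gate or lacks a valid approval."""


class Automation:
    def __init__(self, name: str, developer: str) -> None:
        self.name = name
        self.developer = developer
        self.stage = Stage.INTAKE
        self.history: list[tuple[Stage, Stage, str, str]] = []  # record of every move

    def advance(self, target: Stage, approvals: dict[str, str]) -> None:
        gate = GATES.get((self.stage, target))
        if gate is None:
            raise GateError(f"{self.stage.value} -> {target.value} is not an allowed move")
        approver = approvals.get(gate)
        if approver is None:
            raise GateError(f"missing approval for gate '{gate}'")
        if target is Stage.PRODUCTION and approver == self.developer:
            # Segregation of duties: builders cannot approve their own release.
            raise GateError("the developer cannot approve their own production release")
        self.history.append((self.stage, target, gate, approver))
        self.stage = target
```

Because every move is checked against the same table, skipping design review or self‑approving a release fails immediately, and `history` doubles as a simple record of who approved what.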

Best practices and tools:

- Create a RACI (responsible, accountable, consulted, informed) matrix for the automation lifecycle across CoE, IT, and business units.
- Establish an automation steering committee that meets regularly to review pipeline, risks, and performance against KPIs.
- Use standardized intake and prioritization forms so that all candidate processes are evaluated consistently on volume, complexity, benefit, and risk.
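A RACI matrix is easy to draft in a spreadsheet and just as easy to get subtly wrong. The sketch below keeps the matrix as plain data and checks the usual consistency rules: exactly one Accountable party per activity and at least one Responsible party. The activities, parties, and letter assignments are hypothetical examples, not a recommended allocation.

```python
# Hypothetical RACI assignments; each cell holds one or more of the letters R, A, C, I.
RACI = {
    "process intake":      {"CoE": "A", "Business unit": "R", "IT": "I", "Risk": "C"},
    "solution design":     {"CoE": "AR", "Business unit": "C", "IT": "C", "Risk": "C"},
    "deployment approval": {"CoE": "R", "Business unit": "I", "IT": "A", "Risk": "C"},
    "incident handling":   {"CoE": "R", "Business unit": "I", "IT": "A", "Risk": "I"},
}


def validate_raci(matrix: dict[str, dict[str, str]]) -> list[str]:
    """Return a list of problems; an empty list means every activity is well formed."""
    problems = []
    for activity, cells in matrix.items():
        accountable = [party for party, code in cells.items() if "A" in code]
        responsible = [party for party, code in cells.items() if "R" in code]
        unknown = {ch for code in cells.values() for ch in code} - set("RACI")
        if len(accountable) != 1:
            problems.append(f"{activity}: expected exactly one Accountable party, found {len(accountable)}")
        if not responsible:
            problems.append(f"{activity}: no Responsible party")
        if unknown:
            problems.append(f"{activity}: unknown codes {sorted(unknown)}")
    return problems


if __name__ == "__main__":
    print(validate_raci(RACI) or "RACI matrix is consistent")
```

Running a check like this whenever the matrix changes keeps the steering committee from discovering during an incident that two teams each assumed the other was accountable.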

Lesson from Deployment 2 (Healthcare Provider):

A US healthcare organization described in a scaling‑RPA case study established an Automation Center of Excellence to coordinate RPA across revenue cycle, clinical administration, and shared services. The CoE introduced a structured intake process and collaborated closely with compliance and clinical leaders, resulting in approximately 3 million dollars in savings and a 6.7 times ROI while meeting strict regulatory requirements. The lesson was that clear governance and trusted relationships with business and compliance leaders allow RPA to move from opportunistic scripts to a strategic lever in regulated environments.

Action checklist:

- Decide on centralized, federated, or hybrid CoE model and document how teams will work together.
- Define a standard automation lifecycle with gates for design review, testing, and deployment.
- Set up a steering committee with representation from IT, major business units, and risk or compliance.
- Document escalation paths for production incidents and define service‑level expectations.

Common pitfalls to avoid:

- Focusing governance only on technology and ignoring process ownership and organizational change.
- Over‑engineering governance into a bottleneck that slows every request and drives shadow automation.
- Failing to adapt the operating model as automation shifts from simple tasks to AI‑assisted decision flows and multi‑agent orchestration.

Step 3: Build the Right Team Structure and Roles

A CoE is fundamentally a team of people with complementary skills, not just a set of tools. Guidance from consulting firms and vendors highlights roles such as CoE head or automation director, solution architects, RPA developers, business analysts, governance or risk leads, and change‑management specialists. In 2026, many CoEs also include AI specialists, process‑mining analysts, and enablement leads for citizen developers.

Evidence from scaling case studies shows that teams with both deep technical skills and strong process or domain understanding perform better than purely technical groups. For example, a consulting‑led CoE engagement described how establishing a cross‑functional automation CoE with clear roles in architecture, QA, KPI tracking, and community building enabled an enterprise to operationalize RPA at scale rather than remaining in experiment mode.

Best practices and tools:

- Define core roles such as CoE head, solution architect, lead developer, business analyst, platform engineer, and governance lead, even if some are part‑time in early stages.
- Create career paths and training plans for developers, analysts, and citizen developers that cover both RPA tooling and adjacent skills like scripting, data analysis, and prompt design for AI agents.
- Use a skills matrix to map current capabilities against required competencies in RPA, AI integration, process mining, security, and change management.
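A skills matrix becomes actionable when it is turned into a gap list. The sketch below assumes a 0 to 4 proficiency scale and flags any competency that too few team members meet; setting `min_people=2` also surfaces single points of failure. The competency names, target levels, and team members are all hypothetical.

```python
# Hypothetical target proficiency (0-4 scale) the CoE needs for each competency.
REQUIRED: dict[str, int] = {
    "rpa_platform": 3,
    "ai_integration": 2,
    "process_mining": 2,
    "security": 2,
    "change_management": 2,
}

# Hypothetical assessed levels per team member; missing skills count as 0.
TEAM: dict[str, dict[str, int]] = {
    "developer_1": {"rpa_platform": 4, "security": 2},
    "analyst_1": {"process_mining": 1, "change_management": 3},
    "architect_1": {"rpa_platform": 3, "ai_integration": 1, "security": 3},
}


def skill_gaps(required: dict[str, int], team: dict[str, dict[str, int]],
               min_people: int = 1) -> dict[str, tuple[int, int, int]]:
    """Return {skill: (required level, best level on team, qualified people)} for
    every competency that fewer than `min_people` team members meet."""
    gaps = {}
    for skill, level in required.items():
        levels = [skills.get(skill, 0) for skills in team.values()]
        qualified = sum(1 for lv in levels if lv >= level)
        if qualified < min_people:
            gaps[skill] = (level, max(levels, default=0), qualified)
    return gaps


if __name__ == "__main__":
    # min_people=2 flags single points of failure as well as outright gaps.
    for skill, (need, best, count) in skill_gaps(REQUIRED, TEAM, min_people=2).items():
        print(f"{skill}: need level {need}, best on team {best}, qualified people {count}")
```

In this example AI integration and process mining show up as outright gaps, while change management is covered by only one person, which is the kind of concentration risk that hiring plans and training budgets should address.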

Lesson from Deployment 3 (Manufacturing Company):

A manufacturing automation CoE described in an automotive supplier case study had grown to more than 300 bots with solid ROI, but leaders realized that their CoE functioned mainly as a delivery team rather than as an operating model steward. As the company began experimenting with agentic automation, it found that skills in systems thinking, domain expertise, and data literacy were more critical than deep specialization in a single platform, prompting a redesign of roles and hiring priorities. The lesson was that as automation matures, CoEs must rebalance from pure development capacity toward architecture, governance, and business value stewardship.

Action checklist:

- Map essential CoE roles and identify who will fill each position in the first 12–18 months.
- Define required skills for each role, including RPA tooling, scripting, testing, security, and business knowledge.
- Plan how to add AI, process‑mining, and agent‑orchestration skills over the next two to three years.
- Establish time allocation and KPIs for each role so that responsibilities go beyond building bots.

Common pitfalls to avoid:

- Staffing the CoE solely with technologists and underinvesting in process analysts and change specialists.
- Treating RPA development as a one‑off project role without considering long‑term maintenance and evolution needs.
- Ignoring new skill needs around prompt engineering, AI governance, and cross‑platform orchestration as agentic AI becomes part of the landscape.

Step 4: Select Technology Stack and Establish Standards

RPA CoEs in 2026 typically oversee more than one tool, combining core RPA platforms with intelligent document processing, process mining, and AI services. Hyperautomation guidance stresses that value comes from orchestrating a stack of technologies, not just selecting a single best tool, and that CoEs should remain as platform agnostic as practical to avoid locking themselves into sub‑optimal designs. Manufacturing and CPG CoE case studies similarly describe how platform‑agnostic CoEs can better handle complex, legacy environments and evolving agent architectures.

Standards are as important as tools. Leading CoEs define development guidelines, naming conventions, logging patterns, security baselines, and code review practices to reduce break‑fix cycles and bot downtime. Metrics from Blueprint show that bots with frequent break‑fix cycles and low uptime directly erode ROI through lost business value and manual recovery work, making design and monitoring standards key levers.

Best practices and tools:

- Select an RPA platform (or a small number of platforms) that align with enterprise architecture, security posture, and talent availability.
- Add process‑mining tools such as Celonis or UiPath Process Mining to discover bottlenecks and build a data‑driven automation pipeline.
- Define standards for credential handling, exception management, logging, and alerting so operations teams can support bots like other production systems.
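A common exception‑management convention separates business exceptions (bad or incomplete data, where retrying cannot help) from system exceptions (timeouts and unavailable applications, which may clear on retry), and logs each outcome in a machine‑readable form. The sketch below shows that policy in plain Python as an illustration of the standard, not any RPA platform's API; the bot name, exception classes, retry limits, and log fields are assumptions.

```python
import json
import logging
import time
from typing import Any, Callable

logger = logging.getLogger("bot.invoice_matching")  # hypothetical bot name


class BusinessRuleError(Exception):
    """Bad or incomplete input data: retrying will not help, so route to a person."""


class TransientSystemError(Exception):
    """Timeouts, unavailable applications, and similar faults that may clear on retry."""


def log_event(level: int, item_id: str, status: str, **fields: Any) -> None:
    # One JSON object per line so monitoring tools can parse, count, and alert on it.
    logger.log(level, json.dumps({"item_id": item_id, "status": status, **fields}))


def process_item(item_id: str, work: Callable[[], Any], max_retries: int = 2,
                 backoff_seconds: float = 0.0) -> tuple[str, Any]:
    """Run one work item under a shared exception policy and return (outcome, result)."""
    failures = 0
    while True:
        try:
            result = work()
        except BusinessRuleError as exc:
            log_event(logging.WARNING, item_id, "business_exception", reason=str(exc))
            return "manual_review", None
        except TransientSystemError as exc:
            failures += 1
            if failures > max_retries:
                log_event(logging.ERROR, item_id, "system_exception",
                          reason=str(exc), attempts=failures)
                return "failed", None
            time.sleep(backoff_seconds * failures)  # linear backoff between attempts
        else:
            log_event(logging.INFO, item_id, "success", attempts=failures + 1)
            return "success", result
```

Putting this policy in one shared component, rather than re‑implementing it in every bot, is what lets operations teams alert on `system_exception` counts and route `manual_review` items to a work queue consistently across the estate.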

Lesson from Deployment 4 (Insurance Firm with Process Mining):

A life‑insurance company described in a case study worked with a center of excellence and prioritization committee to identify RPA projects and used process‑mining tools to understand actual process variants and volumes. This combination helped the insurer target high‑impact cases such as claims and payment‑notification processes, lowering costs and improving customer satisfaction; the insurer expected RPA to pay for itself within a year. The key lesson was that coupling CoE governance with process mining leads to a more fact‑based pipeline and avoids automating low‑value or poorly understood processes.

Action checklist:

- Decide which platforms your CoE will support for RPA, document processing, and orchestration.
- Document non‑negotiable technical standards for security, logging, and exception handling.
- Implement environment management guidelines for development, test, and production.
- Create reference architectures for common patterns such as attended bots, unattended batch bots, and AI‑assisted decision flows.

Common pitfalls to avoid:

- Selecting tools purely on license cost without considering integration fit, skills, and roadmap alignment.
- Allowing each business unit to pick its own tooling stack, leading to fragmented skills and duplicated efforts.
- Ignoring monitoring and observability until bots fail frequently in production and support teams lack visibility.

Step 5: Implement Process Discovery, Prioritization, and Pipeline Management

A strong pipeline of well‑qualified processes is one of the most visible differences between mature CoEs and struggling programs. Organizations that scale RPA successfully invest in structured process discovery using workshops, process mining, and task‑mining tools instead of relying solely on ad‑hoc ideas from individual managers. They also apply transparent prioritization criteria such as volume, rule‑based nature, exception rate, risk profile, and strategic alignment to decide which automations to build first.
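Transparent prioritization usually comes down to a weighted score over the criteria above, plus a hard filter for work that is too judgment‑heavy for rules‑based bots. The sketch below assumes each criterion is rated on a 1 to 5 scale, inverts exception rate and risk so that lower is better, and uses weights that are purely illustrative; a steering committee would set and revisit its own.

```python
from dataclasses import dataclass

# Hypothetical weights; a steering committee would set and revisit these.
WEIGHTS = {"volume": 0.30, "rule_based": 0.25, "low_exceptions": 0.15,
           "low_risk": 0.10, "strategic_fit": 0.20}


@dataclass
class Opportunity:
    name: str
    volume: int          # 1-5 band for transaction volume
    rule_based: int      # 1-5: how fully the work follows explicit rules
    exception_rate: int  # 1-5: share of cases needing human judgment (5 = most)
    risk: int            # 1-5: impact if the bot gets it wrong (5 = highest)
    strategic_fit: int   # 1-5: alignment with the CoE's stated objectives


def score(o: Opportunity) -> float:
    """Weighted 1-5 score; exception rate and risk are inverted so lower is better."""
    for name in ("volume", "rule_based", "exception_rate", "risk", "strategic_fit"):
        if not 1 <= getattr(o, name) <= 5:
            raise ValueError(f"{o.name}: {name} must be between 1 and 5")
    criteria = {
        "volume": o.volume,
        "rule_based": o.rule_based,
        "low_exceptions": 6 - o.exception_rate,
        "low_risk": 6 - o.risk,
        "strategic_fit": o.strategic_fit,
    }
    return sum(WEIGHTS[k] * v for k, v in criteria.items())


def prioritize(opportunities: list[Opportunity], min_rule_based: int = 3) -> list[Opportunity]:
    """Drop judgment-heavy work (a hard filter), then rank the rest by score."""
    eligible = [o for o in opportunities if o.rule_based >= min_rule_based]
    return sorted(eligible, key=score, reverse=True)


if __name__ == "__main__":
    backlog = [
        Opportunity("vendor onboarding", 3, 4, 3, 3, 5),
        Opportunity("invoice matching", 5, 5, 2, 2, 4),
        Opportunity("contract review", 2, 2, 5, 4, 5),
    ]
    for o in prioritize(backlog):
        print(f"{o.name}: {score(o):.2f}")
```

Treating rule‑based nature as a filter rather than only a weight keeps judgment‑heavy work, such as the contract review example, out of the RPA queue even when it scores well on strategic fit; such candidates may be better served by process redesign or AI‑assisted approaches.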

Industry case studies show that using process mining not only identifies opportunities but also surfaces root causes of issues that can be solved by both automation and process redesign. This creates a more balanced portfolio where RPA is applied to stable, high‑volume tasks while upstream process fixes remove noise and complexity, improving both bot resilience and business performance.

Best practices and tools:

- Run discovery workshops with process owners and use standardized assessment forms to capture baseline metrics and automation fit.
- Apply process‑mining and task‑mining tools to transactional systems to find high‑volume, repetitive patterns and pain points.
- Maintain a living pipeline or backlog with clear statuses and owners, similar to a product backlog.
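At its core, process mining reconstructs each case's path from an event log and counts how often each path (variant) occurs. The sketch below assumes a minimal log of (case ID, timestamp, activity) rows, with timestamps simplified to integers, and shows only variant counting and rework detection; commercial tools such as Celonis or UiPath Process Mining do far more. The event data is hypothetical.

```python
from collections import Counter, defaultdict
from typing import Iterable

Event = tuple[str, int, str]  # (case_id, timestamp, activity); timestamps simplified to ints


def variants(events: Iterable[Event]) -> Counter:
    """Group events into one time-ordered trace per case and count identical traces."""
    by_case: dict[str, list[tuple[int, str]]] = defaultdict(list)
    for case_id, ts, activity in events:
        by_case[case_id].append((ts, activity))
    return Counter(tuple(activity for _, activity in sorted(steps)) for steps in by_case.values())


def rework_cases(counts: Counter) -> int:
    """Number of cases whose trace repeats at least one activity."""
    return sum(n for trace, n in counts.items() if len(set(trace)) < len(trace))


EVENTS = [  # hypothetical invoice-handling log
    ("c1", 1, "receive invoice"), ("c1", 2, "match PO"), ("c1", 3, "post payment"),
    ("c2", 1, "receive invoice"), ("c2", 2, "match PO"), ("c2", 3, "post payment"),
    ("c3", 1, "receive invoice"), ("c3", 2, "match PO"), ("c3", 4, "request clarification"),
    ("c3", 7, "match PO"), ("c3", 8, "post payment"),
]

if __name__ == "__main__":
    counts = variants(EVENTS)
    for trace, n in counts.most_common():
        print(n, " -> ".join(trace))
    print("cases with rework:", rework_cases(counts))
```

A dominant, stable variant is a good RPA candidate, while frequent rework loops (like the clarification step in case `c3`) often point to upstream fixes that should come before, or instead of, automation.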

Lesson from Deployment 5 (Logistics Leader):

Logistics and supply‑chain case studies describe how leading firms such as global freight and parcel carriers used RPA and agentic automation to handle real‑time shipment tracking, back‑office document management, and customer issue resolution. In one DHL Global Forwarding example, the organization built a virtual delivery center and redeployed around half of the staff in a pilot area to higher‑value work, achieving full ROI within a month and then scaling automation globally. The lesson for CoEs is that a well‑managed pipeline focused on high‑volume, time‑sensitive processes can both fund further investments and create internal momentum.

Action checklist:

- Define scoring criteria for automation opportunities and apply them consistently.
- Stand up process‑mining or analytics capabilities to ground decisions in real data.
- Publish a transparent automation roadmap so stakeholders can see what is in discovery, design, and delivery.
- Regularly review the pipeline in the steering committee and rebalance across functions.

Common pitfalls to avoid:

- Accepting every idea into development without structured assessment, leading to low‑value automations and maintenance burden.
- Focusing only on easy wins and ignoring cross‑functional processes that require coordination but deliver much higher payoff.
- Treating the pipeline as static rather than revisiting it as business priorities and technologies evolve.

Step 6: Establish Governance, Security, Compliance, and Change Management

Governance and risk management are central reasons many enterprises create RPA CoEs in the first place. Research on RPA governance in banking shows that without clear frameworks, automation initiatives can clash with IT policies, data‑protection rules, and regulatory expectations. CoEs help integrate automation into existing IT and risk structures by defining standards for access control, segregation of duties, audit trails, and incident response.

Regulated sectors such as healthcare and financial services illustrate how robust governance prevents costly missteps. In healthcare, automation case studies emphasize ensuring complete auditability and embedding compliance checks within bots, which has contributed to both cost savings and improved quality outcomes. In banking and insurance, RPA is often used to strengthen controls through detailed audit logs and consistent data handling, which reduces errors and supports regulatory reporting.
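Audit trails are only useful to auditors if they are hard to alter quietly. One well‑known technique is a hash chain, where each log entry stores the hash of the previous entry so that editing, deleting, or reordering a record breaks verification. The sketch below is a minimal illustration with hypothetical field names; it is tamper‑evident rather than tamper‑proof, since truncating the newest entries or rewriting the whole chain is only detectable if the latest hash is also anchored somewhere the bot cannot write, such as a separate logging system.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same content always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only bot audit trail; each entry embeds the hash of the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, ts: str, bot: str, action: str, record_id: str, actor: str) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"seq": len(self.entries), "ts": ts, "bot": bot, "action": action,
                "record_id": record_id, "actor": actor, "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute the chain; an edited, reordered, or deleted entry (other than
        truncation at the end) makes verification fail."""
        prev = GENESIS
        for i, entry in enumerate(self.entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["seq"] != i or body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

For example, after a claims bot appends two entries, `verify()` returns `True`; changing the `action` field of the first entry afterward makes it return `False`.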

Best practices and tools:

- Involve information security and compliance teams in design standards, platform selection, and review workflows from the outset.
- Define policies for credentials, including use of secure credential vaults and separation between development and production access.
- Integrate bots into change‑management processes so that system and process changes trigger impact analysis and regression testing.
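A credential policy is easier to enforce when bots never see anything except secrets injected at runtime, and when the runtime refuses cross‑environment access. The sketch below assumes the vault integration exposes secrets as environment variables named `BOT_<ENV>_<NAME>` and that deployment sets `BOT_ENVIRONMENT`; both conventions are hypothetical and would be replaced by your vault's own client in practice.

```python
import os
from typing import Mapping

ENVIRONMENTS = {"dev", "test", "prod"}


class CredentialPolicyError(Exception):
    """Raised when a bot asks for a secret it is not allowed to use."""


def get_credential(name: str, requested_env: str,
                   env: Mapping[str, str] = os.environ) -> str:
    """Return a secret injected at runtime (assumed to arrive as environment variables
    populated from the credential vault), enforcing dev/test/prod separation."""
    running_env = env.get("BOT_ENVIRONMENT")  # hypothetical variable set by deployment
    if running_env not in ENVIRONMENTS:
        raise CredentialPolicyError(f"unknown runtime environment: {running_env!r}")
    if requested_env != running_env:
        raise CredentialPolicyError(
            f"a {running_env} runtime may not read {requested_env} credentials")
    key = f"BOT_{requested_env.upper()}_{name.upper()}"  # hypothetical naming convention
    value = env.get(key)
    if value is None:
        # Fail loudly instead of falling back to a hard-coded or shared password.
        raise CredentialPolicyError(f"{key} is not provisioned; request it through the vault")
    return value
```

Note that error messages name the missing key but never include a secret value, so failures can be logged and alerted on without leaking credentials.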

Lessons from deployments:

- The healthcare Automation CoE noted earlier achieved strong ROI while maintaining compliance because it embedded risk and compliance leaders into its CoE steering and review processes rather than treating them as gatekeepers at the end.
- Banking governance cases highlight that aligning bots with existing IT service‑management frameworks and establishing clear escalation paths greatly reduce outages and unplanned downtime.

Action checklist:

- Document security, privacy, and compliance requirements for automation and include them in design templates.
- Ensure bots are covered by monitoring, logging, and incident‑management tools used for other critical applications.
- Provide change‑management support to help employees understand how automation affects their roles and how they can contribute.

Common pitfalls to avoid:

- Treating RPA as a “shadow IT” tool outside standard security and change processes.
- Underestimating the effort to keep bots compliant when regulations or upstream systems change.
- Failing to communicate clearly with staff about automation goals, which can drive resistance and informal workarounds.

Step 7: Define KPIs, Measure Success, and Enable Continuous Optimization

Measuring automation outcomes is essential for securing ongoing investment and guiding improvement. Automation‑platform vendors and consulting firms recommend defining KPIs before building bots so that changes in cycle time, error rates, hours saved, and cost are measured against a clear baseline. Typical leading indicators include bot utilization, uptime, process coverage, and break‑fix cycles, while lagging indicators include cost savings, revenue uplift, customer‑experience scores, and compliance metrics.

Blueprint and others note that metrics such as average automation uptime and break‑fix hours are often overlooked but are critical to understanding whether an RPA estate is sustainable. Case studies show that programs with fragile bots see significant lost value due to downtime and maintenance, whereas CoEs that track these metrics can identify problematic designs and prioritize refactoring. Some maturity models also encourage tracking mean time to recover, throughput versus backlog growth, and bot‑utilization trends at the portfolio level.
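The operational metrics above can be derived from two simple record types: run results and outages. The sketch below computes success rate, uptime, mean time to recover (here, the mean outage duration), and break‑fix hours for one bot over a reporting period. The record shapes are hypothetical, and the calculation assumes a bot's outages do not overlap.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Run:
    bot: str
    succeeded: bool


@dataclass
class Outage:
    bot: str
    start_h: float    # hours since the start of the reporting period
    end_h: float
    fix_hours: float  # engineering effort spent on the break-fix


def bot_health(bot: str, runs: list[Run], outages: list[Outage],
               period_hours: float) -> dict[str, Optional[float]]:
    """Per-bot operational KPIs; assumes a bot's outages do not overlap."""
    bot_runs = [r for r in runs if r.bot == bot]
    bot_outages = [o for o in outages if o.bot == bot]
    downtime = sum(o.end_h - o.start_h for o in bot_outages)
    return {
        "success_rate": (sum(r.succeeded for r in bot_runs) / len(bot_runs)) if bot_runs else None,
        "uptime": 1 - downtime / period_hours,
        "mttr_hours": downtime / len(bot_outages) if bot_outages else 0.0,
        "break_fix_hours": sum(o.fix_hours for o in bot_outages),
    }
```

For a 30‑day period (720 hours), a bot with 95 of 100 successful runs and two outages lasting 4 and 2 hours would show a 95 percent success rate, uptime of about 99.2 percent, and an MTTR of 3 hours. Rolling these figures up across the portfolio highlights which designs need refactoring.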

Best practices and tools:

- Build a dashboard that consolidates operational metrics (runs, success rates, exceptions), business metrics (hours saved, cost impact), and risk or compliance indicators.
- Use a consistent ROI formula that includes labor savings, error‑cost reduction, and capacity‑avoidance benefits, not just headcount changes.
- Periodically review metrics in the steering committee and feed lessons into design standards and pipeline decisions.
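A consistent ROI formula mainly means agreeing on which benefit and cost lines are counted, every period, for every automation. The sketch below assumes benefits are labor savings, error‑cost reduction, and capacity avoidance, and that costs include maintenance and break‑fix effort as well as platform and build spend, so fragile bots visibly drag ROI down. Field names and figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PeriodValue:
    """Realized value and cost for one reporting period (e.g. a year); figures hypothetical."""
    labor_savings: float
    error_cost_reduction: float
    capacity_avoidance: float   # hiring or overtime avoided as volumes grew
    platform_cost: float        # licences and infrastructure
    build_cost: float
    maintenance_cost: float
    break_fix_hours: float
    engineer_hourly_cost: float

    def benefits(self) -> float:
        return self.labor_savings + self.error_cost_reduction + self.capacity_avoidance

    def costs(self) -> float:
        return (self.platform_cost + self.build_cost + self.maintenance_cost
                + self.break_fix_hours * self.engineer_hourly_cost)


def cumulative_roi(periods: list[PeriodValue]) -> float:
    """(total benefits - total costs) / total costs across all periods."""
    benefits = sum(p.benefits() for p in periods)
    costs = sum(p.costs() for p in periods)
    return (benefits - costs) / costs


if __name__ == "__main__":
    year1 = PeriodValue(150_000, 20_000, 30_000, 40_000, 60_000, 15_000, 100, 80)
    year2 = PeriodValue(180_000, 25_000, 40_000, 40_000, 0, 15_000, 50, 80)
    print(f"year 1 ROI: {cumulative_roi([year1]):.0%}")
    print(f"two-year ROI: {cumulative_roi([year1, year2]):.0%}")
```

Computing ROI cumulatively across periods, rather than averaging yearly figures, keeps the one‑off build cost in view and makes the effect of reduced break‑fix effort in later years easy to see.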

Action checklist:

- Define a small set of leading and lagging KPIs for the CoE and each automation.
- Capture baseline data before go‑live and automate collection wherever possible.
- Use metrics like bot utilization and uptime to identify underused or fragile automations.
- Establish a continuous‑improvement cycle where bots are tuned, consolidated, or retired based on data.

Common pitfalls to avoid:

- Relying on anecdotal success stories instead of quantified results to justify expansion.
- Measuring only initial savings and ignoring maintenance costs, downtime, and opportunity costs.
- Failing to align KPIs with strategic objectives defined at the start, which makes it hard to show strategic impact.

Recommended CoE Team Structure and Roles (2026 Edition)

Example CoE Org Structure

Guidance from multiple sources describes a typical RPA or automation CoE structure anchored around several key roles. At the top sits a CoE head or automation director, often reporting to a C‑level sponsor and accountable for overall automation strategy, roadmap, and value delivery. Reporting into this leader are functional leads across discovery, development, governance, change management, and analytics.

An example 2026 org structure can include:

- CoE Head or Automation Director: Owns strategy, roadmap, funding, and executive communication.
- Process Analysts and Discovery Leads: Facilitate workshops, run process mining or task mining, and translate business needs into automation candidates.
- RPA Developers and AI Specialists: Design, build, and maintain automations, including integrating with AI models and agent frameworks.
- Governance and Compliance Lead: Ensures standards, policies, and risk controls are applied, and coordinates with IT security and audit.
- Change‑Management and Training Lead: Manages communication, training, and adoption, and supports citizen‑developer programs.
- Analytics and ROI Measurement Team: Tracks KPIs, builds dashboards, and provides insights on performance and value realization.
- Citizen‑Developer Enablement Lead: Curates templates, guardrails, and training content for business users building low‑risk automations.

Skill Matrix and Hiring Tips

Across these roles, modern CoEs require a mix of technical, process, and soft skills. Sources highlight the importance of domain knowledge in areas like finance, healthcare, or logistics, alongside skills in scripting, RPA platforms, testing, and secure design. As CoEs move into hyperautomation and agentic AI, new skill categories emerge, including prompt engineering, AI model evaluation, multi‑agent orchestration, and data‑governance expertise.

Hiring guidance from automation consultancies suggests starting with a compact core team of around five to eight people that can cover leadership, architecture, development, and analysis, and then scaling toward 20–50‑plus members as automation spreads across functions. Many organizations also leverage external partners or managed‑service models for specialized skills such as process mining, AI integration, or CoE setup, while building internal capabilities over time.

Scaling the Team Over Time

In early stages, it is common for a small CoE to concentrate on a few high‑impact domains and reuse roles across multiple responsibilities. As the automation footprint grows, teams often add specialized roles such as platform engineers for infrastructure, site‑reliability engineers for bot operations, and dedicated trainers or evangelists for citizen developers. Manufacturing and logistics examples show that when automation reaches hundreds of bots and spans multiple regions, organizations often create virtual delivery centers or hubs that report into the central CoE while focusing on local delivery.

Lessons Learned from 5 Real RPA CoE Deployments

Deployment 1: Global Bank – Fast ROI but Governance Gaps

Banking case studies and governance research show that many large banks achieved quick wins with RPA in areas such as regulatory reporting, KYC, and loan processing, realizing strong early ROI. However, without a robust CoE, these wins sometimes came with governance gaps: inconsistent standards, unclear ownership for bot failures, and friction with IT and risk teams. In one Nordic banking group’s governance case, the bank had to pause parts of its automation program to define a new RPA governance model aligning business and IT around risk and compliance expectations.

Key challenge: Rapid bot growth without clear ownership or risk framework led to audit findings and operational strain.

Solution: Establishment of a central RPA governance structure within a broader automation CoE, with formal roles for IT, operations, and risk, plus standardized approval and monitoring processes.

Result: The bank was able to resume scaling automation with clearer controls, reducing clashes with auditors and avoiding repeated debates over each new bot’s compliance posture.

Lesson: Strong governance and shared ownership must grow in step with ROI; otherwise success can trigger backlash from risk and audit.

Deployment 2: Healthcare Provider – Compliance Success Story

Healthcare RPA case studies show that automation can deliver both financial and clinical benefits when combined with structured governance. In one example, an Automation Center of Excellence working with a healthcare organization targeted high‑impact processes such as revenue‑cycle operations and claims management, resulting in savings of around 3 million dollars and a 6.7 times ROI while maintaining regulatory compliance. Other healthcare RPA initiatives report large reductions in backlog, improved documentation quality, and significant releases of nursing time for direct patient care when RPA is deployed carefully.

Key challenge: Automating sensitive workflows without compromising compliance or data privacy.

Solution: Creation of an Automation CoE that embedded compliance and business leaders in governance, used rigorous intake assessments, and ensured bots produced complete audit trails.

Result: High financial returns, reduced backlogs, and improved reporting quality, all achieved without major compliance incidents.

Lesson: In regulated sectors, CoEs that treat compliance as a design constraint and involve regulators or auditors early unlock more sustainable value.

Deployment 3: Manufacturing Company – Scaling Challenges

Manufacturing and industrial automation CoE stories illustrate how RPA CoEs face new challenges once bot counts exceed a few hundred. An automotive supplier described as having a mature RPA CoE with more than 300 bots still found that its operating model and skill mix were not ready for agentic automation, multi‑platform environments, and complex plant‑floor integrations. Other manufacturing RPA cases highlight difficulties in maintaining bots across legacy systems and variable processes when governance and observability are limited.

Key challenge: Scaling from dozens to hundreds of bots while preparing for agentic AI and without overwhelming support teams.

Solution: Evolving the CoE into a broader automation operating model, emphasizing systems thinking, domain expertise, and data literacy, and adopting platform‑agnostic practices.

Result: Better alignment between automation and manufacturing objectives, improved ability to integrate agentic capabilities, and more sustainable support structures.

Lesson: As automation estates grow, CoEs must shift focus from volume of bots delivered to quality, resilience, and strategic fit.

Deployment 4: Insurance Firm – Integration with Process Mining

Insurance organizations have used process mining together with RPA CoEs to improve both customer experience and cost efficiency. One anonymized life‑insurance case study describes how a mid‑sized insurer worked with a center of excellence and a prioritization committee to assess and select RPA projects using structured tools. In another insurance example, process mining of customer‑care and payment‑notification journeys revealed the root causes of high inquiry volumes. This led to targeted automation and communication improvements.

Key challenge: High process complexity and fragmented data made it difficult to know where automation would actually improve outcomes.

Solution: Use of process‑mining platforms in combination with a CoE‑driven prioritization approach to pinpoint high‑impact process variants and design automations that addressed underlying issues.

Result: Multi‑million‑dollar annual cost savings, higher customer satisfaction, and a pipeline of further automation opportunities with clear business cases.

Lesson: CoEs that treat process mining and analytics as first‑class capabilities build healthier pipelines and avoid automating symptoms instead of causes.

Deployment 5: Logistics Leader – Agentic AI Integration

Logistics case studies show how RPA and AI are being combined in 2025–2026 to create more autonomous operations. One DHL Global Forwarding story describes creating an RPA‑enabled virtual delivery center that quickly achieved ROI, improved supply‑chain transparency, and redeployed staff to higher‑value work. Other logistics and CPG examples describe Automation CoEs where agentic AI orchestrates RPA bots to collate real‑time sales or shipment data and provide tailored guidance for field staff.

Key challenge: Integrating RPA with AI‑driven decisioning and real‑time data while maintaining reliability and control.

Solution: Evolving the CoE into an automation or hyperautomation CoE with clear ownership of both RPA and AI agents, standardizing patterns for how agents trigger bots, and monitoring overall outcomes.

Result: Faster responses to shipment or demand changes, reduced manual effort in back‑office logistics tasks, and better customer‑service metrics.

Lesson: Agentic AI adds value when CoEs define clear guardrails and integration patterns; without that, AI‑driven automations risk becoming yet another siloed technology.

Critical Success Metrics and ROI Measurement for RPA CoEs

Leading and Lagging KPIs

Leading indicators help CoEs understand whether their automation program is healthy day to day, while lagging indicators show ultimate business impact. Sources recommend tracking metrics such as number of automated processes, bot utilization, average automation uptime, exception rates, break‑fix cycles, and mean time to recover for operational health. For business impact, common KPIs include hours saved, cost savings or avoidance, error‑rate reductions, throughput improvements, customer‑experience scores, and compliance findings.

Bot utilization measures how often bots run and how much of their 24/7 availability they actually use. Uptime tracks the percentage of time bots are available to run. Studies cite average uptime of around 92 percent, but they also note that across large portfolios the remaining downtime can translate into millions in lost value. Automation‑maturity resources also recommend monitoring pipeline metrics, such as delivery throughput versus backlog growth, to see whether delivery capacity keeps up with demand.
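To make these definitions concrete, here is a minimal Python sketch showing how a CoE might compute uptime, mean time to recover, utilization, and exceptions per run from its own run and outage logs. The `Outage` record, its fields, and the sample numbers are hypothetical. Real orchestrators expose this data in their own formats. The uptime function also assumes that outages never overlap.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Outage:
    # Hypothetical outage record; times are hours from the start of the reporting window.
    bot: str
    down_at: float
    recovered_at: float


def uptime_pct(window_hours: float, outages: list[Outage]) -> float:
    """Percentage of the window the bot was available (assumes outages do not overlap)."""
    downtime = sum(o.recovered_at - o.down_at for o in outages)
    return 100.0 * (window_hours - downtime) / window_hours


def mttr_hours(outages: list[Outage]) -> float:
    """Mean time to recover: the average outage duration, or 0.0 if there were none."""
    return mean(o.recovered_at - o.down_at for o in outages) if outages else 0.0


def utilization_pct(busy_hours: float, window_hours: float) -> float:
    """Share of a 24/7 window the bot spent executing work."""
    return 100.0 * busy_hours / window_hours


def exceptions_per_run(runs: int, exceptions: int) -> float:
    """Leading indicator: a rising value often signals process or UI drift."""
    return exceptions / runs if runs else 0.0


week = 24 * 7
outages = [Outage("invoice-bot", 10.0, 14.0), Outage("invoice-bot", 100.0, 102.0)]
print(round(uptime_pct(week, outages), 1), mttr_hours(outages),
      utilization_pct(84, week), exceptions_per_run(400, 12))
# 96.4 3.0 50.0 0.03
```

Keeping each metric as a small, named function makes it easier for teams to agree on one definition before the numbers reach a dashboard.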

Recommended Dashboard Metrics

Automation vendors and consultancies suggest consolidating CoE metrics into dashboards accessible to both technical and business stakeholders. A practical CoE dashboard might include:

- Portfolio overview: number of live automations, by function and type.
- Operational health: success rates, exceptions per run, average run time, uptime, break‑fix cycles, and mean time to recover.
- Business impact: hours saved, cost savings, revenue impact, SLAs met, and customer‑experience indicators such as NPS or complaint volumes.
- Risk and compliance: audit findings related to bots, incidents involving data privacy or security, and adherence to control standards.
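Most of a dashboard like this is a roll‑up of per‑automation records. The sketch below assumes a hypothetical `AutomationStats` record exported once per reporting period. It aggregates live automations, runs, hours saved, and compliance findings by business function. It is an illustration only, not any vendor's reporting API.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class AutomationStats:
    # Hypothetical per-automation record for one reporting period.
    name: str
    function: str              # business function, e.g. "Finance" or "HR"
    runs: int
    successes: int
    hours_saved: float
    compliance_findings: int   # audit or security findings attributed to this bot


def dashboard_by_function(stats: list[AutomationStats]) -> dict[str, dict[str, float]]:
    """Roll per-automation records up into one dashboard row per business function."""
    totals: dict[str, dict[str, float]] = defaultdict(
        lambda: {"live": 0, "runs": 0, "successes": 0,
                 "hours_saved": 0.0, "compliance_findings": 0}
    )
    for s in stats:
        row = totals[s.function]
        row["live"] += 1
        row["runs"] += s.runs
        row["successes"] += s.successes
        row["hours_saved"] += s.hours_saved
        row["compliance_findings"] += s.compliance_findings
    for row in totals.values():
        # Derived after summing, so high-volume bots weigh more than rarely used ones.
        row["success_rate"] = row["successes"] / row["runs"] if row["runs"] else 0.0
    return dict(totals)


stats = [
    AutomationStats("ap-invoices", "Finance", 1000, 970, 420.0, 0),
    AutomationStats("bank-rec", "Finance", 200, 190, 80.0, 1),
    AutomationStats("onboarding", "HR", 50, 50, 30.0, 0),
]
for function, row in dashboard_by_function(stats).items():
    print(function, row)
```

The success rate is computed from summed runs rather than by averaging per‑bot rates. This design choice keeps a small, rarely used bot from skewing a whole function's figure.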

ROI Calculation and Examples

Guidance on automation ROI stresses defining calculation methods in advance and including both direct and indirect benefits. A simple approach estimates labor savings by multiplying transaction volume by time saved per transaction and by an appropriate labor rate. It then adds error‑cost reductions and capacity‑avoidance benefits where applicable. Studies show that many RPA programs achieve first‑year ROI between 30 and 200 percent. Some large‑scale or well‑targeted programs report returns above 300 percent when measured over several years.
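The calculation described above can be sketched in a few lines of Python. The sketch uses the conventional definition of ROI as net benefit divided by cost. Its payback estimate assumes benefits accrue evenly through the year. All input figures are purely illustrative and do not come from any of the case studies cited here.

```python
def annual_labor_savings(volume_per_year: int, minutes_saved_per_txn: float,
                         hourly_rate: float) -> float:
    """Volume x time saved per transaction x labor rate (minutes converted to hours)."""
    return volume_per_year * minutes_saved_per_txn / 60 * hourly_rate


def roi_pct(total_benefits: float, total_costs: float) -> float:
    """Conventional ROI: net benefit as a percentage of cost."""
    return 100.0 * (total_benefits - total_costs) / total_costs


def payback_months(total_costs: float, annual_benefits: float) -> float:
    """Months until cumulative benefits cover costs, assuming benefits accrue evenly."""
    return total_costs / (annual_benefits / 12)


# Illustrative inputs only, not figures from any case study.
labor = annual_labor_savings(volume_per_year=120_000, minutes_saved_per_txn=6, hourly_rate=40)
error_cost_reduction = 30_000
capacity_avoidance = 0
benefits = labor + error_cost_reduction + capacity_avoidance
costs = 200_000  # licences, build, and first-year run costs

print(f"labor={labor:,.0f} roi={roi_pct(benefits, costs):.0f}% "
      f"payback={payback_months(costs, benefits):.1f} months")
# labor=480,000 roi=155% payback=4.7 months
```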

Healthcare and banking CoE case studies provide concrete examples. One healthcare Automation CoE reported about 3 million dollars in savings and a 6.7 times ROI through carefully selected automations in revenue cycle and shared services. A banking regulatory‑reporting implementation has been cited as delivering ROI exceeding 300 percent in the first year by reducing manual work and errors across complex reporting processes.

Maturity Model Overview

Automation‑maturity models describe a progression from pilot projects to fully integrated hyperautomation CoEs. A representative model can include:

- Level 1 – Pilot: A few bots in isolated areas, limited governance, basic metrics focused on local savings.
- Level 2 – Structured Program: Formalized CoE team, standard toolset, defined development standards, and consistent ROI tracking.
- Level 3 – Enterprise Scale: Dozens of processes automated across functions, integrated pipeline, stronger governance, and dashboards visible to stakeholders.
- Level 4 – Intelligent Automation CoE: Integration with process mining, AI, and advanced analytics; focus on end‑to‑end journeys rather than tasks.
- Level 5 – Hyperautomation Center of Excellence: Orchestrated ecosystem of RPA, AI agents, and human workers with continuous optimization and strong business‑strategy alignment.

Common Pitfalls and How to Avoid Them

Analysts and practitioners highlight recurring reasons why RPA programs and CoEs fail to deliver expected value. Common pitfalls include poor process selection, lack of executive sponsorship, weak governance, skill gaps, underinvestment in change management, fragmented tooling, and inadequate monitoring. Many of these issues are visible in failed scaling attempts where bots proliferate without clear ownership, leading to high maintenance costs and stakeholder fatigue.

Mitigation strategies drawn from successful deployments include:

- Investing early in governance structures that balance speed and control, with clear roles for IT, business, and risk.
- Using structured discovery and prioritization, often with process mining, to avoid low‑value or unstable candidates.
- Building a balanced team that includes process and change‑management skills, not only technical developers.
- Standardizing tooling and development practices to reduce break‑fix incidents and downtime.
- Communicating openly about automation goals and providing reskilling pathways so employees see opportunities rather than only threats.

Real‑world cases show that when CoEs act as partners to the business rather than as gatekeepers, adoption and results improve, but they must also retain enough authority to prevent fragmentation and risk exposure.

Templates, Checklists, and Tools You Can Use

RPA and automation CoE resources consistently recommend using lightweight templates and checklists to drive consistency and speed. While exact documents vary, common artifacts include CoE charters, process‑assessment forms, governance policy checklists, ROI trackers, and skills inventories for the team and citizen developers. Many organizations build these using office‑productivity or collaboration tools they already have, integrating them into portals or ticketing systems for ease of use.

Examples of templates and tools suggested by industry guidance include:

- CoE Charter Template: Sections covering vision, scope, operating model, decision rights, funding, and success metrics.
- Process‑Prioritization Matrix: A scoring sheet that rates candidate processes on volume, stability, rule‑based nature, exception levels, regulatory impact, and strategic fit.
- Governance‑Policy Checklist: Items such as security controls, access management, logging, testing, and documentation requirements for each automation.
- ROI‑Tracker Dashboard: A regularly updated view of hours saved, financial impact, and non‑financial benefits for each bot and domain.
- Team‑Skills Assessment Sheet: A matrix mapping required competencies against current staff capabilities and training plans.
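At its core, a process‑prioritization matrix is a weighted sum. The minimal sketch below assumes hypothetical weights and a 1–5 rating scale. Every rating is oriented so that 5 always favors automation; for example, a 5 on exceptions means few exceptions. Each CoE would set its own criteria and weights.

```python
# Hypothetical weights (they sum to 1.0); a real CoE would set and version its own.
WEIGHTS = {
    "volume": 0.25,
    "stability": 0.20,
    "rule_based": 0.20,
    "low_exceptions": 0.15,     # 5 = few exceptions, 1 = exception-heavy
    "regulatory_impact": 0.10,  # 5 = automation would materially reduce compliance risk
    "strategic_fit": 0.10,
}


def priority_score(ratings: dict[str, int]) -> float:
    """Weighted score on a 1-5 scale; every rating is oriented so 5 favors automation."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    for criterion, value in ratings.items():
        if not 1 <= value <= 5:
            raise ValueError(f"{criterion} rating {value} is outside 1-5")
    return sum(weight * ratings[c] for c, weight in WEIGHTS.items())


def rank_candidates(candidates: dict[str, dict[str, int]]) -> list[tuple[str, float]]:
    """Return (process, score) pairs, highest priority first."""
    scored = [(name, priority_score(r)) for name, r in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


candidates = {
    "vendor-invoice-entry": {"volume": 5, "stability": 4, "rule_based": 5,
                             "low_exceptions": 4, "regulatory_impact": 3, "strategic_fit": 3},
    "contract-review": {"volume": 2, "stability": 2, "rule_based": 1,
                        "low_exceptions": 2, "regulatory_impact": 4, "strategic_fit": 4},
}
for name, score in rank_candidates(candidates):
    print(f"{name}: {score:.2f}")
```

Publishing the weights in the CoE charter helps keep prioritization debates focused on the ratings rather than on the formula.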

For tooling, process‑mining platforms like UiPath Process Mining and Celonis are frequently highlighted for pipeline discovery, while collaboration suites such as Microsoft Teams and SharePoint or custom portals serve as CoE hubs for documentation and requests. Some organizations also integrate their CoE workflows into IT service‑management tools so automation work is tracked alongside other technology initiatives.

Future‑Proofing Your RPA CoE for 2027–2028

Looking ahead, hyperautomation and agentic AI are expected to further transform how automation CoEs operate. Process‑excellence research forecasts that agentic AI will increasingly monitor processes, propose optimizations, and coordinate actions across bots and humans, shifting process management from project‑based initiatives to always‑on optimization. Industrial and AI‑advisory reports describe agentic AI as the next frontier beyond traditional RPA, enabling more autonomous and adaptive decision‑making but also requiring new governance frameworks.

To prepare, CoEs can start by designing for interoperability and modularity, ensuring that their automation stack can integrate AI agents, new data sources, and orchestration tools without disruptive replatforming. They can also extend their charters to cover AI‑governance policies, outcome‑focused monitoring, and multi‑agent orchestration patterns so that citizen developers and business units can safely experiment within guardrails. Over time, many RPA CoEs are likely to evolve into broader intelligent‑automation or hyperautomation CoEs where the emphasis is less on specific tools and more on managing an ecosystem of digital workers.
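One way to make such guardrails enforceable is a small policy check between AI agents and the bots they want to start. The sketch below is hypothetical throughout. `BotPolicy`, the agent and bot names, and the rule that high‑risk bots need human approval are illustrative assumptions, not features of any specific orchestration platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BotPolicy:
    # Hypothetical policy entry maintained by the CoE for one RPA bot.
    allowed_agents: frozenset[str]  # AI agents permitted to trigger this bot
    high_risk: bool                 # e.g. posts payments or changes master data


def authorize_trigger(policies: dict[str, BotPolicy], agent: str, bot: str,
                      human_approved: bool = False) -> tuple[bool, str]:
    """Decide whether an AI agent may start a bot; always return a reason for the audit log."""
    policy = policies.get(bot)
    if policy is None:
        return False, f"{bot} is not registered with the CoE"
    if agent not in policy.allowed_agents:
        return False, f"{agent} is not on the allowlist for {bot}"
    if policy.high_risk and not human_approved:
        return False, f"{bot} is high-risk and needs human approval"
    return True, "allowed"


policies = {
    "post-invoice": BotPolicy(frozenset({"ap-agent"}), high_risk=True),
    "fetch-shipments": BotPolicy(frozenset({"ops-agent", "ap-agent"}), high_risk=False),
}
print(authorize_trigger(policies, "ops-agent", "fetch-shipments"))
print(authorize_trigger(policies, "ap-agent", "post-invoice"))
```

Returning a reason with every decision, including denials, gives auditors a trail of what agents attempted as well as what they were allowed to do.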

Conclusion

Industry evidence and case studies show that a well‑run RPA CoE can turn automation from a scattered set of scripts into a strategic capability that consistently delivers higher ROI, better compliance, and more resilient operations. In 2026, success requires going beyond basic RPA governance to an automation operating model that integrates AI, process mining, and orchestration while maintaining strong guardrails. The seven‑step framework outlined here (sponsorship, operating model, team design, technology standards, pipeline management, governance, and metrics) provides a practical roadmap for building or refreshing such a CoE.

Organizations that act now can position their CoEs to handle the coming surge of AI agents, citizen developers, and multi‑agent workflows, turning automation into a durable competitive advantage rather than a one‑off cost‑reduction exercise. Those that delay risk being stuck with fragile bots, siloed experiments, and mounting technical and operational debt as automation and AI become more deeply embedded in how work gets done.

FAQs

How long does it take to build a mature RPA CoE?

Sources indicate that moving from pilot to a structured CoE with standardized governance typically takes six to eighteen months, depending on organizational size and starting point. Reaching enterprise‑scale maturity with dozens of automations and integrated AI and process mining capabilities usually unfolds over several years, often in parallel with broader digital‑transformation efforts.

What is the ideal team size for an RPA CoE?

Guidance suggests starting with a compact core team of about five to eight people who can cover leadership, architecture, development, and analysis responsibilities. As automation permeates more functions, mature CoEs in large enterprises may grow to twenty to fifty or more specialized roles, sometimes supplemented by virtual delivery centers or managed partners.

Can small and mid‑sized businesses build a CoE, or should they use a different model?

Experts note that while smaller organizations may not need a large formal CoE, they still benefit from centralizing standards, tool ownership, and governance, even if this is a virtual or part‑time team. Some SMBs instead rely on external service providers or shared CoE‑as‑a‑service models for tooling, best practices, and support while keeping a small internal coordination group.

How do you measure RPA CoE success?

Success is typically measured through a combination of financial metrics such as ROI, cost savings, and payback periods, and operational metrics such as automation coverage, uptime, exception rates, and mean time to recover. Leading CoEs also track softer outcomes such as employee‑experience improvements, audit results, and contributions to strategic objectives like growth or customer‑experience differentiation.

How important is process mining for a modern CoE?

Process mining is increasingly viewed as a critical accelerant for CoEs because it provides objective insight into process variants, bottlenecks, and volumes, which improves automation prioritization and design. Insurance and other case studies show that combining process mining with CoE governance can unlock substantial savings and customer‑experience gains by targeting root causes rather than superficial symptoms.

Do you need agentic AI to justify building or upgrading a CoE in 2026?

Current guidance suggests that while agentic AI is not a prerequisite for establishing a CoE, designing a CoE that can accommodate AI agents and evolving technologies is prudent. Organizations focusing only on classic RPA may find it harder to integrate future capabilities if their CoE is too narrowly defined or tightly coupled to a single platform.

What role do citizen developers play in an RPA CoE?

Citizen developers can significantly extend automation coverage when supported by training, templates, and guardrails defined by the CoE. Successful programs clearly delineate which kinds of automations business users can build, set security and data‑access constraints, and provide central review for higher‑risk or cross‑system workflows.
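The line between self‑service and centrally reviewed automations can be written down as an explicit routing rule. This Python sketch assumes a hypothetical intake record and three illustrative triggers for central review: more than one system, sensitive data classes, or writes to a system of record. Each CoE would substitute its own criteria.

```python
from dataclasses import dataclass, field

# Hypothetical data classes the CoE treats as sensitive.
SENSITIVE_DATA = {"pii", "phi", "payment_card"}


@dataclass
class AutomationRequest:
    # Hypothetical intake record submitted through the CoE portal.
    name: str
    built_by_citizen: bool
    systems: set[str] = field(default_factory=set)
    data_classes: set[str] = field(default_factory=set)
    writes_to_system_of_record: bool = False


def review_route(req: AutomationRequest) -> tuple[str, list[str]]:
    """Route a request to self-service or central CoE review, recording the reasons."""
    if not req.built_by_citizen:
        return "coe-pipeline", []
    reasons = []
    if len(req.systems) > 1:
        reasons.append("cross-system workflow")
    sensitive = req.data_classes & SENSITIVE_DATA
    if sensitive:
        reasons.append("sensitive data: " + ", ".join(sorted(sensitive)))
    if req.writes_to_system_of_record:
        reasons.append("writes to a system of record")
    return ("central-review" if reasons else "self-service"), reasons


request = AutomationRequest("refund-sync", built_by_citizen=True,
                            systems={"crm", "erp"}, data_classes={"pii"})
print(review_route(request))
```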

How do you keep an RPA CoE from becoming a bureaucratic bottleneck?

Practitioners recommend keeping the CoE lean and service‑oriented, using standard templates and clear SLAs for reviews and support rather than ad‑hoc approvals. Federated or hybrid operating models, where business‑unit teams develop under shared standards, can also maintain speed while preserving coherence.
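Review SLAs only help if they are measured. The minimal sketch below assumes that review requests are logged with submission and completion timestamps. It computes the share of decided reviews that met the SLA. Reviews still open but already past the SLA count as misses. Reviews still within the SLA are left out until they close. The five‑day SLA in the example is an arbitrary illustration.

```python
from datetime import datetime, timedelta
from typing import Optional


def sla_attainment(reviews: list[tuple[datetime, Optional[datetime]]],
                   sla: timedelta, now: datetime) -> float:
    """Percentage of decided reviews that finished within the SLA."""
    met = missed = 0
    for submitted, completed in reviews:
        if completed is None:
            if now - submitted > sla:
                missed += 1  # still open and already late
            continue         # still open but not yet late: no verdict yet
        if completed - submitted <= sla:
            met += 1
        else:
            missed += 1
    decided = met + missed
    return 100.0 * met / decided if decided else 100.0  # nothing decided yet


t0 = datetime(2026, 1, 5, 9, 0)
reviews = [
    (t0, t0 + timedelta(days=2)),    # closed on time
    (t0, t0 + timedelta(days=6)),    # closed late
    (t0, None),                      # open and overdue
    (t0 + timedelta(days=9), None),  # open, still within SLA
]
print(f"{sla_attainment(reviews, timedelta(days=5), t0 + timedelta(days=10)):.1f}%")
# 33.3%
```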